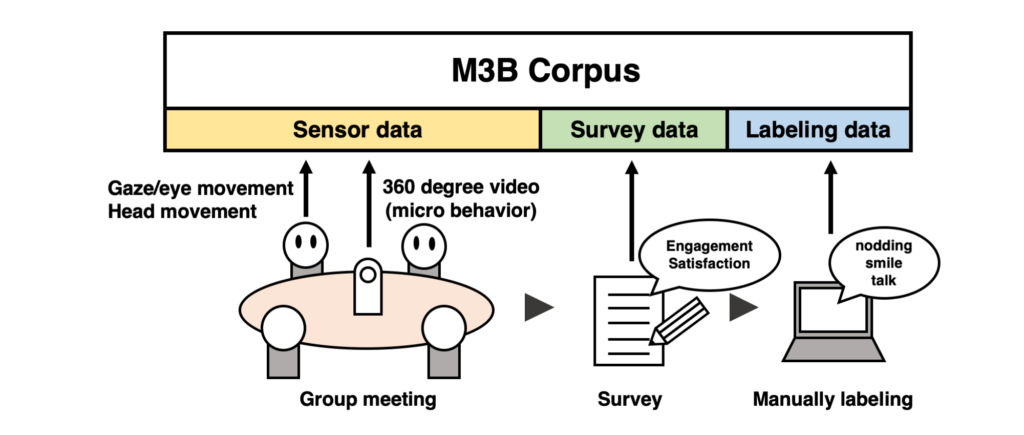

The M3B (Multi-Modal Meeting Behavior) Corpus is a dataset that includes sensor data on micro behaviors in group discussions, survey data on satisfaction and engagement, and label data for gestures and behaviors (nodding, talking, smiling).

We removed or processed any data that could identify individuals (face images and voices).

About ethics approval

The study received ethics approval (approval no: 2018-Ⅰ-28) after review by the research ethics committee at Nara Institute of Science and Technology.

Outline of Experiment

The flow of the experiment is as follows.

1. Four participants discuss a topic that is narrowed down to two answers. The topic is simple, so that everyone can take part equally (e.g., "Which do you like, dogs or cats?" or "Which do you like, summer or winter?").

2. After the discussion is finished, each participant answers a survey about satisfaction and engagement.

3. After the participants have done steps 1 and 2 twice, each participant labels their own behavior while referring to the video.

Summary of the dataset

Detail of Experiment

The detail of the experiment is as follows.

- Period: May 7th – Jun 5th, 2019

- Participants: NAIST Ubiquitous Computing System Lab’s students. Male 17 (10 participated 2 times), Female 4 (2 participated 2 times)

- Age: 22-25 years old

- Native Language: Japanese

It took 120 hours to create the dataset. Most of that time was spent on labeling work.

About Sensor

The following three types of sensors are used.

RICOH THETA V

Records micro behaviors as 360-degree video.

The video is converted to point and vector features with OpenFace. The background is blacked out, and faces and voices are removed.

About performance

- 4K quality

- 360-degree camera

- 29.97 fps
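When aligning the video with the other sensor streams, it can help to convert between frame indices and elapsed time. The following is a minimal sketch, assuming a constant frame rate of exactly 29.97 fps as stated above; the function names are hypothetical and not part of the corpus.

```python
FPS = 29.97  # frame rate stated for the RICOH THETA V recordings


def frame_to_seconds(frame_index: int, fps: float = FPS) -> float:
    """Return the elapsed time in seconds at the start of a frame."""
    if frame_index < 0:
        raise ValueError("frame_index must be non-negative")
    return frame_index / fps


def seconds_to_frame(seconds: float, fps: float = FPS) -> int:
    """Return the index of the frame shown at the given elapsed time."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    # Truncation picks the frame that has already started at this time.
    return int(seconds * fps)
```

For example, `seconds_to_frame(1.0)` gives frame 29, because frame 30 only starts slightly after the one-second mark.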

Pupil Labs Eye Tracker

Records the wearer's eye movements as video and outputs CSV files.

We removed the world videos because they include face images. We will add the videos converted by OpenFace.

LPMS-B2

An IMU that includes a 3-axis acceleration sensor and a 3-axis gyro sensor.

It is fixed to the grip of the Pupil Labs eye tracker and records the acceleration and gyro data of each participant’s head as CSV.

Some data is broken because of sensor malfunction. Please check the text in the corpus.
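Because some IMU records are broken, it may be useful to guard against unparsable rows when reading the CSV files. The sketch below computes the acceleration magnitude per row and returns `None` for rows that cannot be parsed. The column names `acc_x`, `acc_y`, and `acc_z` and the sample data are assumptions made for illustration; check the actual CSV headers in the corpus.

```python
import csv
import io
import math

# Hypothetical sample; the real files may use different column names.
SAMPLE_CSV = """acc_x,acc_y,acc_z
0.0,0.0,1.0
3.0,4.0,0.0
1.0,,2.0
"""


def acceleration_magnitudes(csv_text, columns=("acc_x", "acc_y", "acc_z")):
    """Return the 3-axis magnitude per row, or None for broken rows."""
    magnitudes = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        try:
            x, y, z = (float(row[name]) for name in columns)
        except (KeyError, TypeError, ValueError):
            # Missing column, short row, or non-numeric value.
            magnitudes.append(None)
            continue
        magnitudes.append(math.sqrt(x * x + y * y + z * z))
    return magnitudes
```

Keeping a `None` placeholder instead of dropping the row preserves the row count, which makes it easier to line the result up with timestamps later.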

About Survey

After the discussion, each participant answered a survey about their change of opinion, engagement, and so on. Each item is answered on a scale of 1 to 5.

| ID | Description |
| --- | --- |
| A1 | Before the discussion, is your opinion the former (1) or the latter (5)? |
| A2 | After the discussion, is your opinion the former (1) or the latter (5)? |

For the following items, 1 means “disagree”, 3 means “neutral”, and 5 means “agree”.

| ID | Description |
| --- | --- |
| B1 | I was satisfied with the discussion. |
| B2 | I could express my own opinion. |
| B3 | I listened to what people with the same opinion said. |
| B4 | I listened to what people with the opposite opinion said. |
| B5 | The discussion was enjoyable. |
| B6 | The group often cut off my speech. |
| B7 | I would like to have a discussion with this group in the future. |
| B8 | I paid attention to the 360-degree camera. |
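Since A1 and A2 use the same 1 to 5 scale before and after the discussion, one simple way to summarize a change of opinion is the difference between them. The following is a minimal sketch; representing a participant's answers as a dictionary keyed by item ID is an assumption, not the corpus file format, and the example values are made up.

```python
SCALE_MIN, SCALE_MAX = 1, 5  # answer range stated for the survey


def validate_answers(answers):
    """Raise ValueError if any answer is not an integer from 1 to 5."""
    for item, value in answers.items():
        if not isinstance(value, int) or not SCALE_MIN <= value <= SCALE_MAX:
            raise ValueError(f"{item}: expected an integer 1-5, got {value!r}")


def opinion_shift(answers):
    """Return A2 - A1; positive means a move toward the latter option."""
    validate_answers(answers)
    return answers["A2"] - answers["A1"]


# Hypothetical answers for one participant in one discussion.
example = {"A1": 2, "A2": 4, "B1": 5, "B5": 4}
print(opinion_shift(example))  # prints 2
```

A shift of 0 means the participant reported the same position before and after the discussion.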

About Labeling

Each participant labeled their own head and face behaviors. The labels are shown in the table below. While labeling, they also annotated the reason for each gesture.

| Label name | Category | Detail |
| --- | --- | --- |
| smile | response | without special meaning generated by the listener |
| smile | agree | smile with consent |
| smile | interesting | smile occurs when the discussion is interesting |
| smile | sympathy | seeking empathy |
| nodding | response | without special meaning generated by the listener |
| nodding | agree | nodding with consent |
| talk | description | explain his/her opinion |
| talk | objection | deny the opinion of the other |
| talk | agree | agree with the other opinion |
| talk | say | give the right to speak to another person |
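When working with the annotations, it can be handy to check that each (label, category) pair is one that the table allows. The sketch below reads the table as three labels (smile, nodding, talk), each with the categories listed under it; the data structure and function name are illustrative assumptions and not part of the corpus.

```python
# Allowed categories per label, transcribed from the labeling table.
LABEL_CATEGORIES = {
    "smile": {"response", "agree", "interesting", "sympathy"},
    "nodding": {"response", "agree"},
    "talk": {"description", "objection", "agree", "say"},
}


def is_valid_annotation(label, category):
    """Return True if the category is defined for the given label."""
    return category in LABEL_CATEGORIES.get(label, set())
```

For example, `is_valid_annotation("smile", "sympathy")` is true, while `is_valid_annotation("nodding", "sympathy")` is false because "sympathy" is only listed under smile.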

Terms of use

You can use this dataset for non-profit and research purposes only. When presenting research results that use the dataset at academic conferences, please cite the following paper:

Yusuke Soneda, Yuki Matsuda, Yutaka Arakawa, Keiichi Yasumoto: “M3B Corpus: Multi-Modal Meeting Behavior Corpus for Group Meeting Assessment”, UbiComp/ISWC ’19 Adjunct, September 9–13, 2019, London, United Kingdom.

Contact about Corpus

For more details, please contact yukimat [at] is.naist.jp (NAIST Assist. Prof. Yuki Matsuda).

Data provider

M3B Corpus is the result of joint research between the Kyushu University Humanophilic System Laboratory (Yutaka Arakawa Laboratory) and the Nara Institute of Science and Technology Ubiquitous Computing System Laboratory (Keiichi Yasumoto Laboratory).